
The Competitive AI Radar - Understanding How Your Brand Compares to Others

If AI is already answering questions about your category (which it definitely is), then your brand is also being evaluated alongside your competitors.

When someone asks ChatGPT, Gemini, or Perplexity who the best options are in a given space, those tools don’t just look for a single relevant brand. They weigh signals across the entire field: who shows up most often, who gets cited, and who sounds credible enough to recommend.

That’s the reality most brands are stepping into without a clear enough read on where they stand. Find out if your brand is ready with our AI Discovery Health Check.

That’s why, at Edgar Allan, we use a Competitive AI Radar to make that positioning more visible and easier to address.

AI visibility only matters in context

A lot of conversations around AEO (answer engine optimization) start with a simple question: “Do we show up in AI search?”

That question doesn’t get you very far.

The more important question is where you show up relative to the brands you compete with.

AI systems are comparative by design. They surface answers, not options. If another brand is clearer, better cited, or more consistently referenced for high-intent prompts, that brand becomes the default. That’s the impact of AEO.

The Competitive AI Radar takes this beyond theory. It provides a measurable snapshot of that competitive reality at a given moment in time.

What the Competitive AI Radar shows

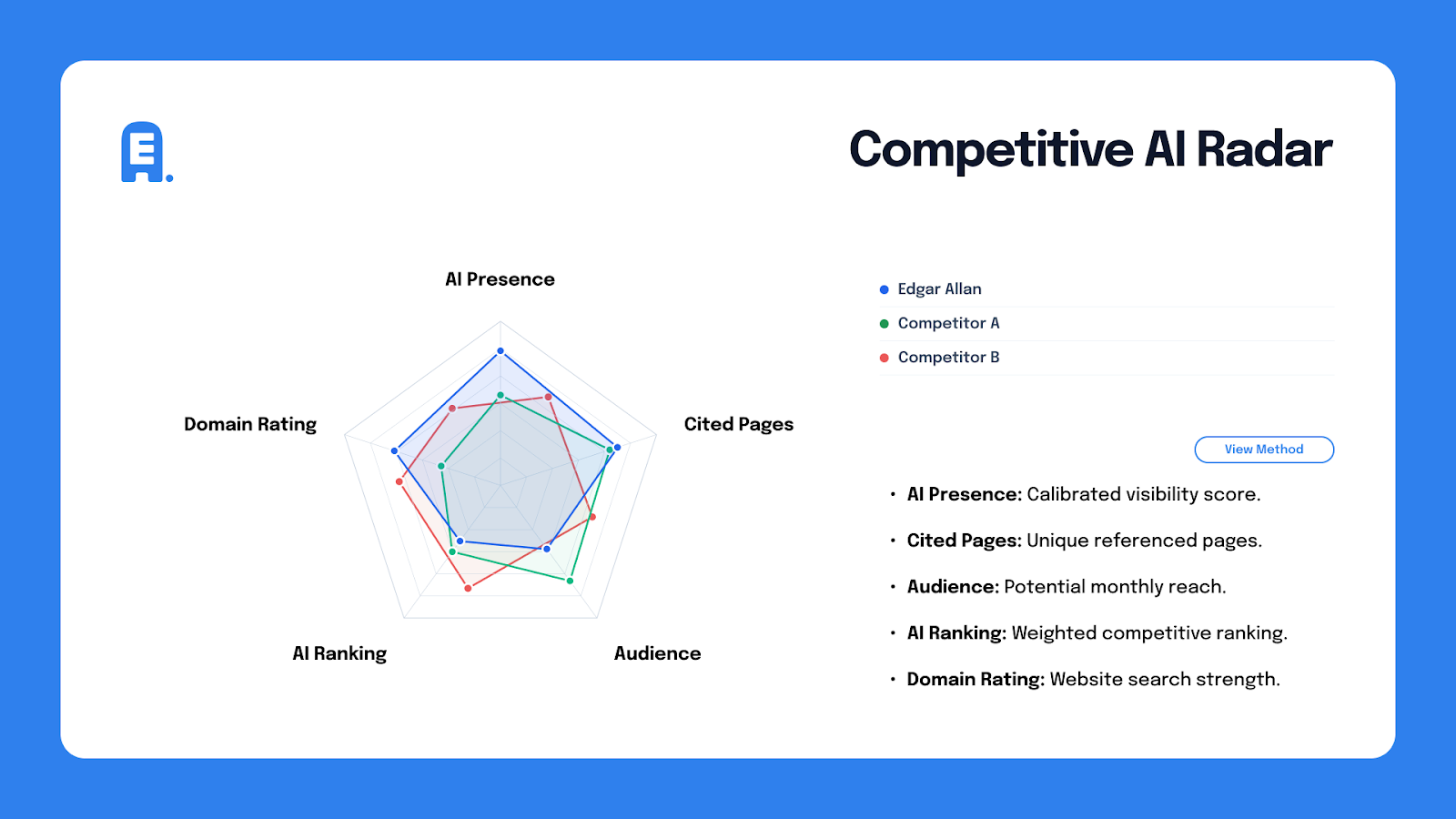

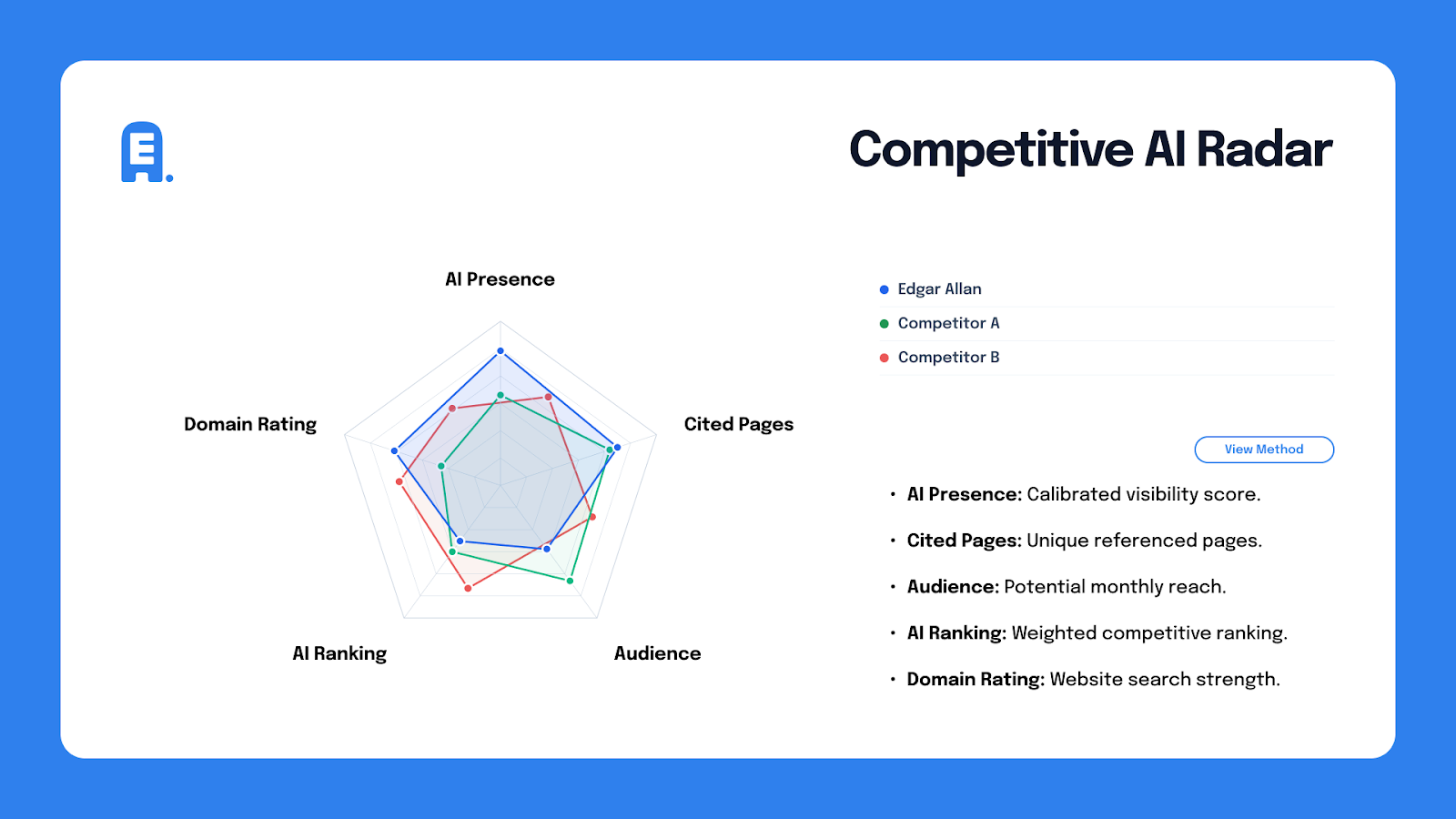

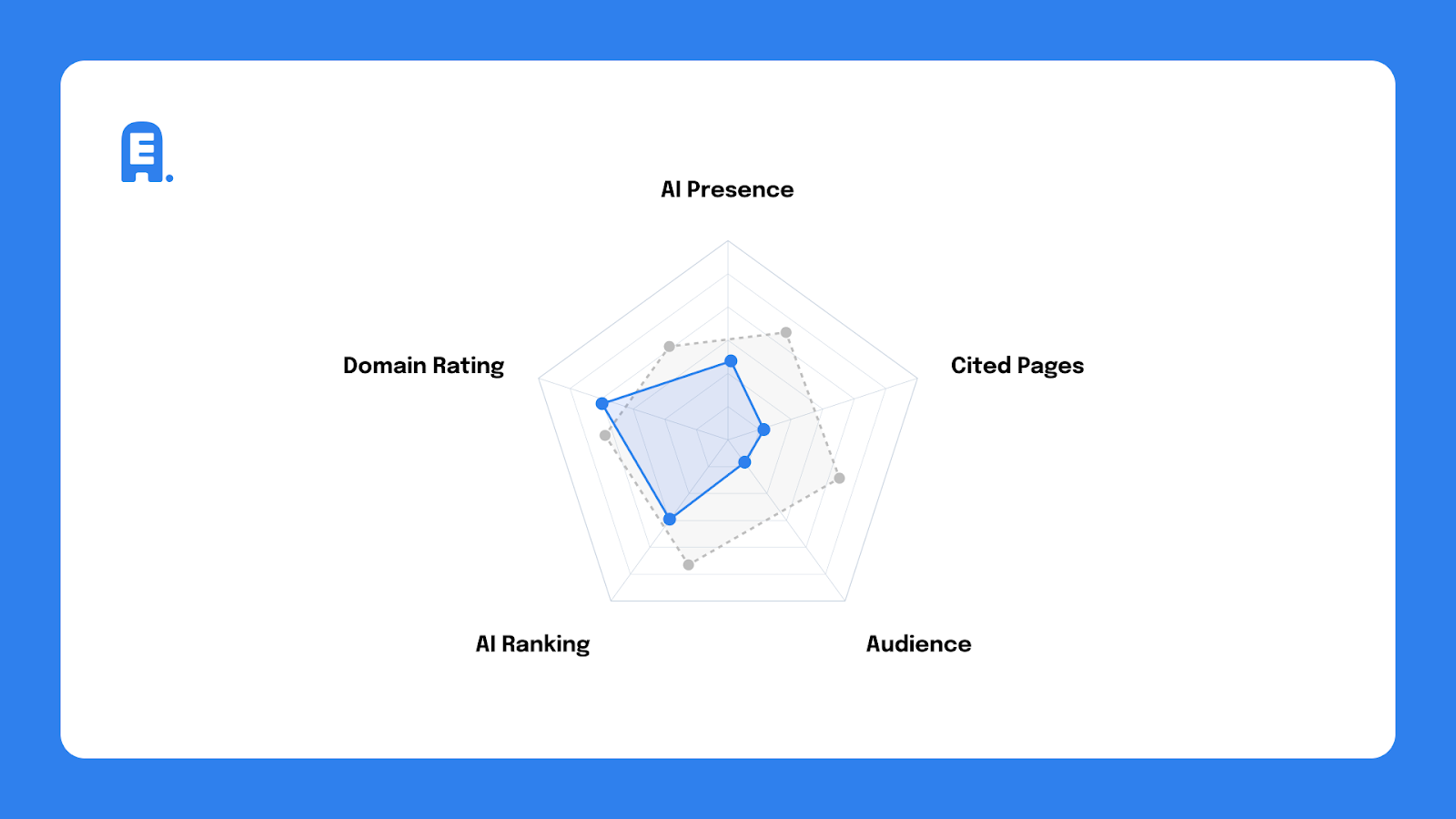

The Radar plots five metrics that together reflect how a brand performs across modern AI tools like ChatGPT, Gemini, and Perplexity.

Visualized as a pentagon, each metric represents a different pressure point. When one collapses, it pulls the whole shape inward. But when they’re aligned, performance compounds.
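To make the "pulls the whole shape inward" idea concrete, here is a minimal sketch that computes the area of a radar shape from its metric values. It assumes each metric has already been scaled to a common 0 to 1 range and that the axes are evenly spaced; the function name `radar_area` and the sample values are ours for illustration, not part of the Radar itself.

```python
import math


def radar_area(values: list[float]) -> float:
    """Area of the polygon traced by values on evenly spaced radar axes."""
    n = len(values)
    if n < 3:
        raise ValueError("a radar shape needs at least three axes")
    # Each pair of neighbouring axes forms a triangle with the centre;
    # its area is 0.5 * r1 * r2 * sin(angle between the axes).
    wedge = math.sin(2 * math.pi / n)
    pair_products = sum(values[i] * values[(i + 1) % n] for i in range(n))
    return 0.5 * wedge * pair_products


balanced = [1.0, 1.0, 1.0, 1.0, 1.0]
collapsed = [1.0, 1.0, 0.2, 1.0, 1.0]  # one metric drops, the rest hold

# Share of the balanced shape's area that survives the collapse.
print(round(radar_area(collapsed) / radar_area(balanced), 2))
```

In this sketch a single weak metric shrinks two neighbouring triangles at once, which is why the shape contracts more than the one number alone would suggest. Note that the area depends on the order of the axes, so it is a visual aid and not a score in its own right.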

Because the tool is interactive, we can analyze multiple brands at once and overlay an industry average to see where a brand is leading, lagging, or over-relying on a single strength.

For example, we often see radar graphs where a brand’s SEO authority looks solid, but cited pages and AI audience are underdeveloped. The takeaway is pretty clear: that site has authority, but not enough content that AI systems can confidently reference.

And that’s the level of clarity the Radar is designed to surface.

What each of these metrics means

AI Presence (Visibility) Score

A calibrated 0–100 score reflecting how frequently a brand appears in AI answers, weighted by citation depth and competitive rank. A one-off mention in a generic response isn’t the same as being consistently referenced as the answer. This score accounts for that difference.

Monthly AI Audience

An estimate of potential reach, calculated by multiplying prompt volume by brand mentions. This shows how often people are likely to encounter your brand through AI-driven queries and not just whether you appear at all.
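As a rough illustration of that multiplication, the sketch below sums prompt volume times mention rate across a set of prompts. The field names, the idea of sampling several answers per prompt, and the numbers are all assumptions made for the example; the Radar's actual calculation may differ.

```python
from dataclasses import dataclass


@dataclass
class PromptSample:
    prompt: str
    monthly_volume: int   # assumed: estimated times the prompt is asked per month
    answers_sampled: int  # how many AI answers were collected for the prompt
    brand_mentions: int   # how many of those answers mention the brand


def monthly_ai_audience(samples: list[PromptSample]) -> float:
    """Sum of prompt volume times the share of sampled answers naming the brand."""
    total = 0.0
    for s in samples:
        if s.answers_sampled == 0:
            continue  # no answers collected, so the prompt contributes nothing
        mention_rate = s.brand_mentions / s.answers_sampled
        total += s.monthly_volume * mention_rate
    return total


# Hypothetical figures, for illustration only.
samples = [
    PromptSample("best enterprise security platforms", 4000, 20, 10),
    PromptSample("top venture capital firms", 1000, 20, 5),
]
print(monthly_ai_audience(samples))  # 2250.0
```

A brand mentioned in half the answers to a high-volume prompt reaches more people than one mentioned every time for a prompt almost nobody asks, which is the distinction this metric is meant to capture.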

Cited Pages

The number of unique pages from a brand’s domain that AI models reference as sources. This is a critical signal. If AI tools can’t point back to your content, they can’t rely on it. And when trust drops, so does visibility.
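Counting unique pages sounds trivial, but the same page is often cited under slightly different URLs. The sketch below shows one way to deduplicate them, assuming you already have a list of URLs that AI answers cited. The normalization rules (dropping `www.`, query strings, fragments, and trailing slashes) and the `example.com` domains are our assumptions.

```python
from urllib.parse import urlsplit


def count_cited_pages(cited_urls: list[str], brand_domain: str) -> int:
    """Count distinct pages on brand_domain among the URLs AI answers cited."""
    pages = set()
    for url in cited_urls:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        # Keep only the brand's own domain and its subdomains.
        if host != brand_domain and not host.endswith("." + brand_domain):
            continue
        # Query strings and fragments are ignored, and trailing slashes
        # stripped, so one page cited twice is counted once.
        path = parts.path.rstrip("/") or "/"
        pages.add((host, path))
    return len(pages)


cited = [
    "https://www.example.com/blog/aeo-guide",
    "https://example.com/blog/aeo-guide/?utm_source=ai",
    "https://example.com/pricing#plans",
    "https://competitor.example.org/blog/aeo-guide",
]
print(count_cited_pages(cited, "example.com"))  # 2
```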

AI Ranking (Commercial Position)

A weighted score measuring how often and how highly a brand appears for high-intent, commercial prompts, like “top venture capital firms” or “best enterprise security platforms.”

This is where AEO stops being abstract and starts aligning with conversion and, ultimately, revenue.
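One common way to score "how often and how highly" is reciprocal rank: being named first counts fully, being named fourth counts a quarter, and being absent counts zero. The sketch below applies that idea on a 0 to 100 scale. The reciprocal-rank weighting, the optional per-prompt weights, and the third prompt are assumptions for illustration, not a description of the Radar's actual formula.

```python
from typing import Optional


def ai_ranking_score(
    positions: dict[str, Optional[int]],
    prompt_weights: Optional[dict[str, float]] = None,
) -> float:
    """Weighted reciprocal-rank score on a 0-100 scale.

    positions maps each commercial prompt to the brand's position in the
    AI answer (1 = named first), or None when the brand is absent.
    """
    weights = prompt_weights or {}
    weighted_sum = 0.0
    weight_total = 0.0
    for prompt, position in positions.items():
        w = weights.get(prompt, 1.0)  # prompts without a weight count as 1.0
        weight_total += w
        if position is not None:
            weighted_sum += w / position
    if weight_total == 0:
        return 0.0
    return 100.0 * weighted_sum / weight_total


# Hypothetical positions, for illustration only.
positions = {
    "top venture capital firms": 1,
    "best enterprise security platforms": 4,
    "best seed investors": None,
}
print(round(ai_ranking_score(positions), 1))  # 41.7
```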

Domain Rating (DR)

A baseline measure of a site’s overall search authority and backlink strength. Traditional SEO still matters here, because strong AEO performance depends on a foundation that AI systems already recognize as authoritative.

Why imbalance matters more than any single score

Looking at these metrics in isolation can be misleading. The Radar is designed to show how they interact.

Patterns emerge quickly:

- A brand with a strong AI audience but few cited pages is seen but not trusted.

- A brand with solid citations but weak SEO authority is doing the right content work on an unstable base.

- A brand with good SEO but poor AI ranking is optimized for search engines, but not for answers.

Once the metrics are plotted together, these issues don’t need interpretation. They’re visible.
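For readers who prefer rules to shapes, the three patterns above can be written as simple checks. This sketch assumes each metric is expressed as a ratio to the industry average (1.0 means average); the metric keys and the `strong` and `weak` cutoffs are illustrative choices of ours.

```python
def diagnose_imbalance(
    ratios: dict[str, float],
    strong: float = 1.0,
    weak: float = 0.7,
) -> list[str]:
    """Flag the three imbalance patterns.

    ratios holds each metric divided by the industry average.
    The strong and weak cutoffs are illustrative assumptions.
    """
    def is_strong(metric: str) -> bool:
        return ratios[metric] >= strong

    def is_weak(metric: str) -> bool:
        return ratios[metric] < weak

    findings = []
    if is_strong("ai_audience") and is_weak("cited_pages"):
        findings.append("seen but not trusted")
    if is_strong("cited_pages") and is_weak("domain_rating"):
        findings.append("right content work on an unstable base")
    if is_strong("domain_rating") and is_weak("ai_ranking"):
        findings.append("optimized for search engines, but not for answers")
    return findings


# Hypothetical brand, for illustration only.
brand = {"ai_audience": 1.3, "cited_pages": 0.4, "domain_rating": 1.1, "ai_ranking": 0.5}
print(diagnose_imbalance(brand))
```

For this hypothetical brand, the first and third patterns fire: it is seen but not trusted, and it is optimized for search engines but not for answers.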

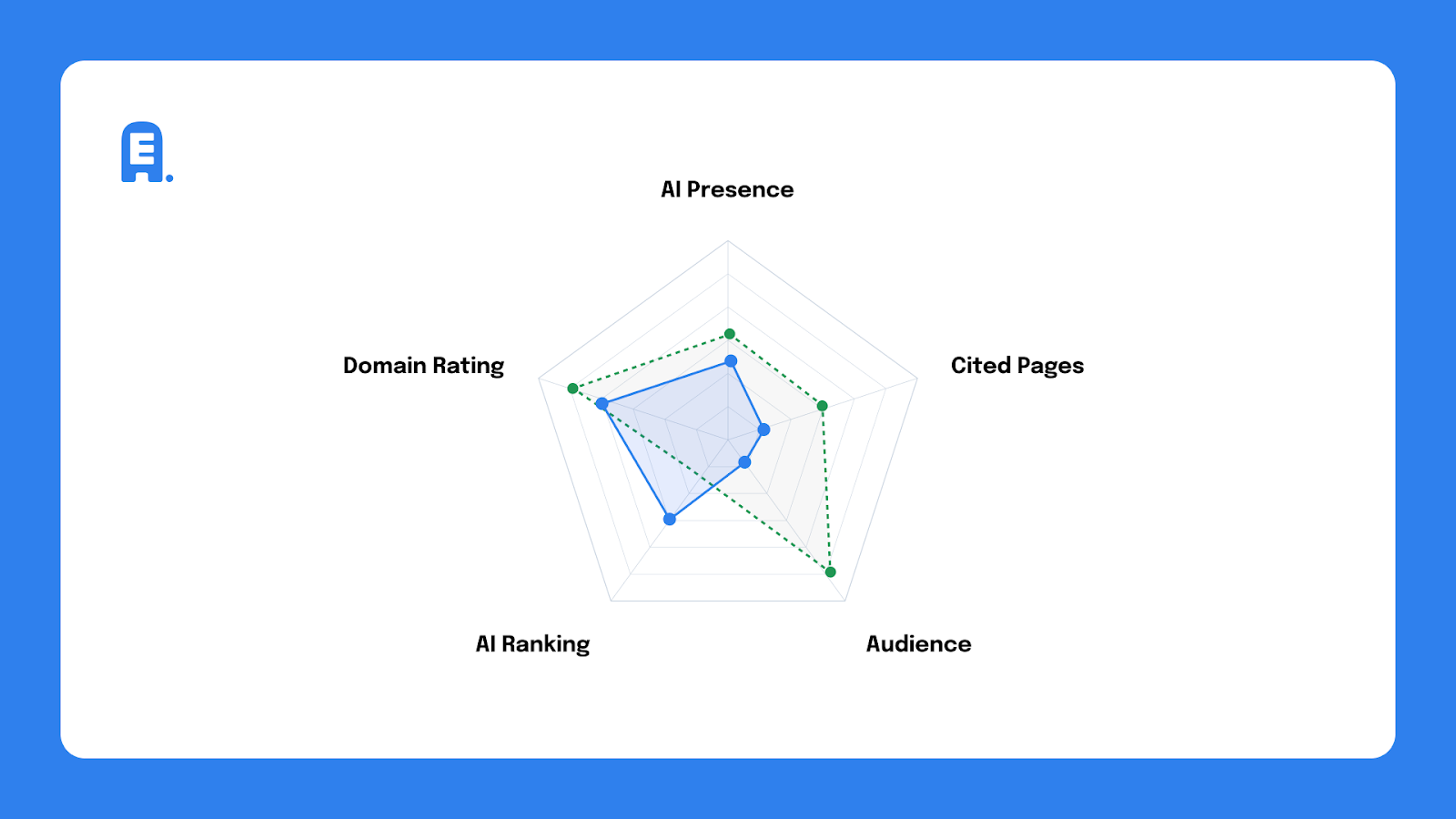

Competitive comparison makes the gaps clearer

The Competitive AI Radar becomes especially useful when benchmarks are layered in.

By plotting a brand against the industry average, we can immediately see where it’s underperforming in citation volume, audience reach, or commercial positioning. In many cases, the signals point to the same underlying issue: not enough high-quality, AEO-optimized content that AI systems are willing to reference.

In other cases, the limitation is more foundational. Weak SEO performance often caps how far AEO efforts can go, regardless of intent.
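A benchmark comparison of this kind boils down to a relative gap per metric. The sketch below ranks a brand's metrics from largest shortfall to largest lead against an industry average. The metric names and figures are hypothetical, and the Radar may normalize its values differently.

```python
def gaps_vs_benchmark(
    brand: dict[str, float],
    industry_avg: dict[str, float],
) -> list[tuple[str, float]]:
    """Relative gap per metric, sorted from biggest shortfall to biggest lead.

    A gap of -0.75 means the brand sits 75% below the industry average.
    """
    gaps = {}
    for metric, avg in industry_avg.items():
        if avg == 0:
            continue  # a relative gap is undefined against a zero benchmark
        gaps[metric] = (brand.get(metric, 0.0) - avg) / avg
    return sorted(gaps.items(), key=lambda item: item[1])


# Hypothetical figures, for illustration only.
brand_metrics = {"cited_pages": 12, "ai_audience": 3000, "domain_rating": 66}
industry = {"cited_pages": 48, "ai_audience": 4000, "domain_rating": 60}

for metric, gap in gaps_vs_benchmark(brand_metrics, industry):
    print(f"{metric}: {gap:+.0%}")
```

Sorting by shortfall puts the most urgent gap first. In this made-up case, cited pages trail the benchmark by far more than audience does, while domain authority is slightly ahead.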

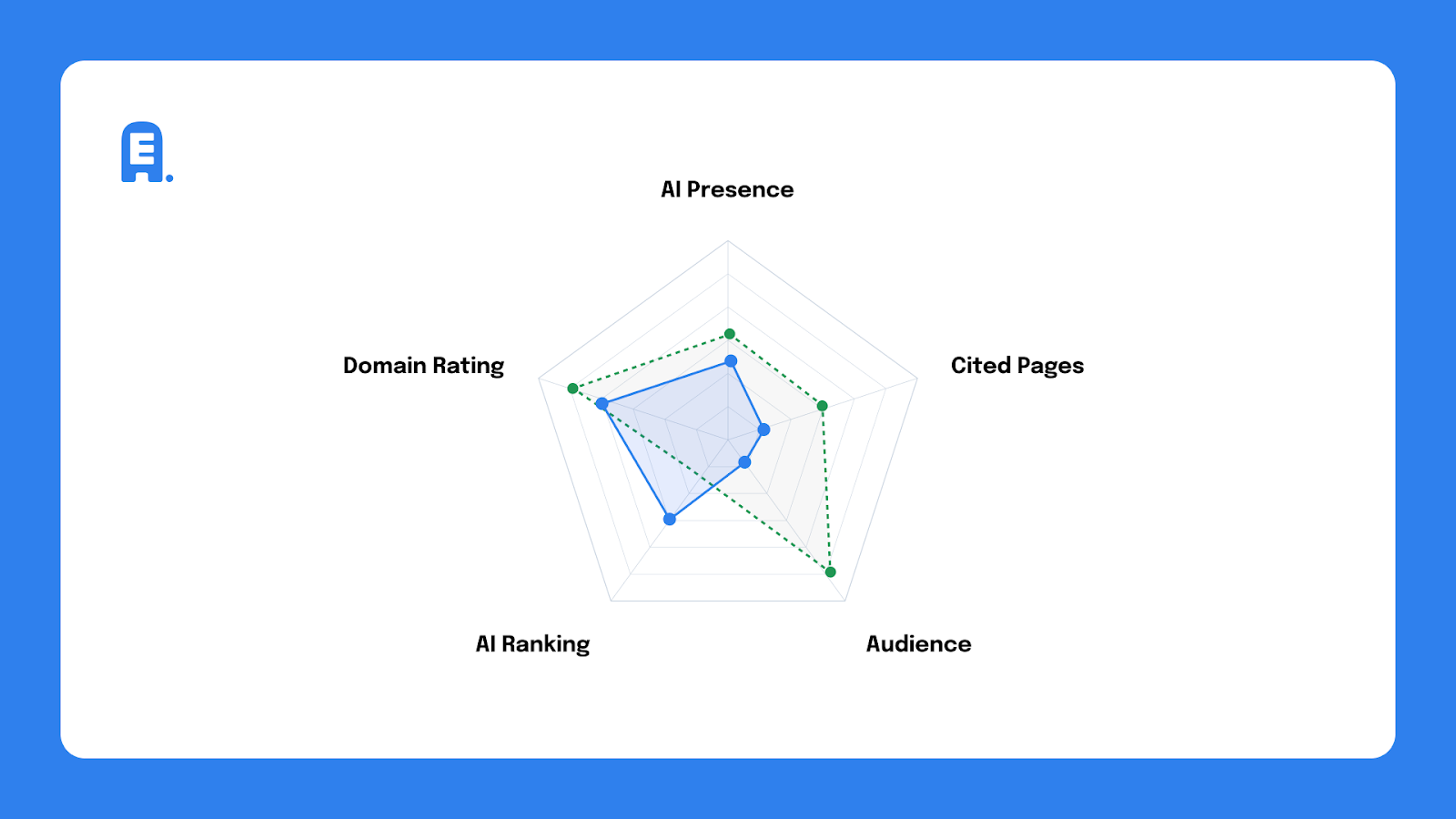

We can also compare two brands directly (client versus core competitor) to show which brand consistently wins AI visibility in key market segments. That comparison changes the conversation. It shifts the focus from abstract ambition to concrete gaps that can be closed.

How the data is gathered

This isn’t a one-off prompt test or surface-level scan.

- Authority and SEO signals are sourced from SEMrush.

- A proprietary crawl bot analyzes outputs from leading LLMs.

- Testing runs against a stable list of high-intent commercial prompts to determine whether a brand is treated as “the answer.”

- All results are calibrated against an industry average to contextualize citation volume and audience size.

The result is a grounded view of where a brand stands right now and not where it hopes to be.

What to do once you see it

The Competitive AI Radar isn’t a scorecard to pin up for kudos. It’s a diagnostic tool that shows where performance breaks down and why.

Once the gaps are visible, the next steps are pretty clear. You do one or a combination of the following:

- Strengthen content that AI systems can cite with confidence.

- Address SEO weaknesses that limit AEO performance.

- Focus on prompts tied to real buying intent and not vanity visibility.

- Close the specific gaps competitors are exploiting in AI search results.

Your brand is already out there, and AI tools are already shaping how it’s perceived. The only question now is whether that perception is intentional or left to chance. If you want to make your brand AI-ready, we can help.

Read more from the Edgar Allan Blog.

- What AI Search Actually Rewards: Expertise, POV, and Saying Something Real

- Machines Are Reading Your Website (And They’re Probably Getting Your Brand All Wrong)

- Machines Are Reading Your Website (And They’re Probably Getting Your Brand All Wrong) Part 2

- From Discovery to Decision: How AEO Changed the CRO Playbook

AI visibility only matters in context

A lot of conversations around AEO start with a simple question: “Do we show up in AI search?”

That question doesn’t get you very far.

The more important question is where you show up relative to the brands you compete with.

AI systems are comparative by design. They surface answers, not options. If another brand is clearer, better cited, or more consistently referenced for high-intent prompts, that brand becomes the default. That’s the impact of AEO.

The Competitive AI Radar gives us more than just theory. It provides a snapshot of that competitive reality as a measurable moment in time.

What the Competitive AI Radar shows

The Radar plots five metrics that together reflect how a brand performs across modern AI tools like ChatGPT, Gemini, and Perplexity.

Visualized as a pentagon, each metric represents a different pressure point. When one collapses, it pulls the whole shape inward. But when they’re aligned, performance compounds.

Because the tool is interactive, we can analyze multiple brands at once and overlay an industry average to see where a brand is leading, lagging, or over-relying on a single strength.

For example, we often see radar graphs where a brand’s SEO authority looks solid, but cited pages and AI audience are underdeveloped. The takeaway is pretty clear: that site has authority, but not enough content that AI systems can confidently reference.

And that’s the level of clarity the Radar is designed to surface.

What each of these metrics means

AI Presence (Visibility) Score

A calibrated 0–100 score reflecting how frequently a brand appears in AI answers, weighted by citation depth and competitive rank. A one-off mention in a generic response isn’t the same as being consistently referenced as the answer. This score accounts for that difference.

Monthly AI Audience

An estimate of potential reach, calculated by multiplying prompt volume by brand mentions. This shows how often people are likely to encounter your brand through AI-driven queries and not just whether you appear at all.

Cited Pages

The number of unique pages from a brand’s domain that AI models reference as sources. This is a critical signal. If AI tools can’t point back to your content, they can’t rely on it. And when trust drops, so does visibility.

AI Ranking (Commercial Position)

A weighted score measuring how often and how highly a brand appears for high-intent, commercial prompts, like “top venture capital firms” or “best enterprise security platforms.”

This is where AEO stops being abstract and starts aligning with conversion and, ultimately, revenue.

Domain Rating (DR)

A baseline measure of a site’s overall search authority and backlink strength. It’s important to note that traditional SEO still matters here because strong AEO performance depends on a foundation that AI systems already recognize as authoritative.

Why imbalance matters more than any single source

Looking at these metrics in isolation can be misleading. The Radar is designed to show how they interact.

Patterns emerge quickly:

- A brand with a strong AI audience but low-cited pages is seen but not trusted.

- A brand with solid citations but weak SEO authority is doing the right content work on an unstable base.

- A brand with good SEO but poor AI ranking is optimized for search engines, but not for answers.

Once the metrics are plotted together, these issues don’t need interpretation. They’re visible.

Competitive comparison makes the gaps clearer

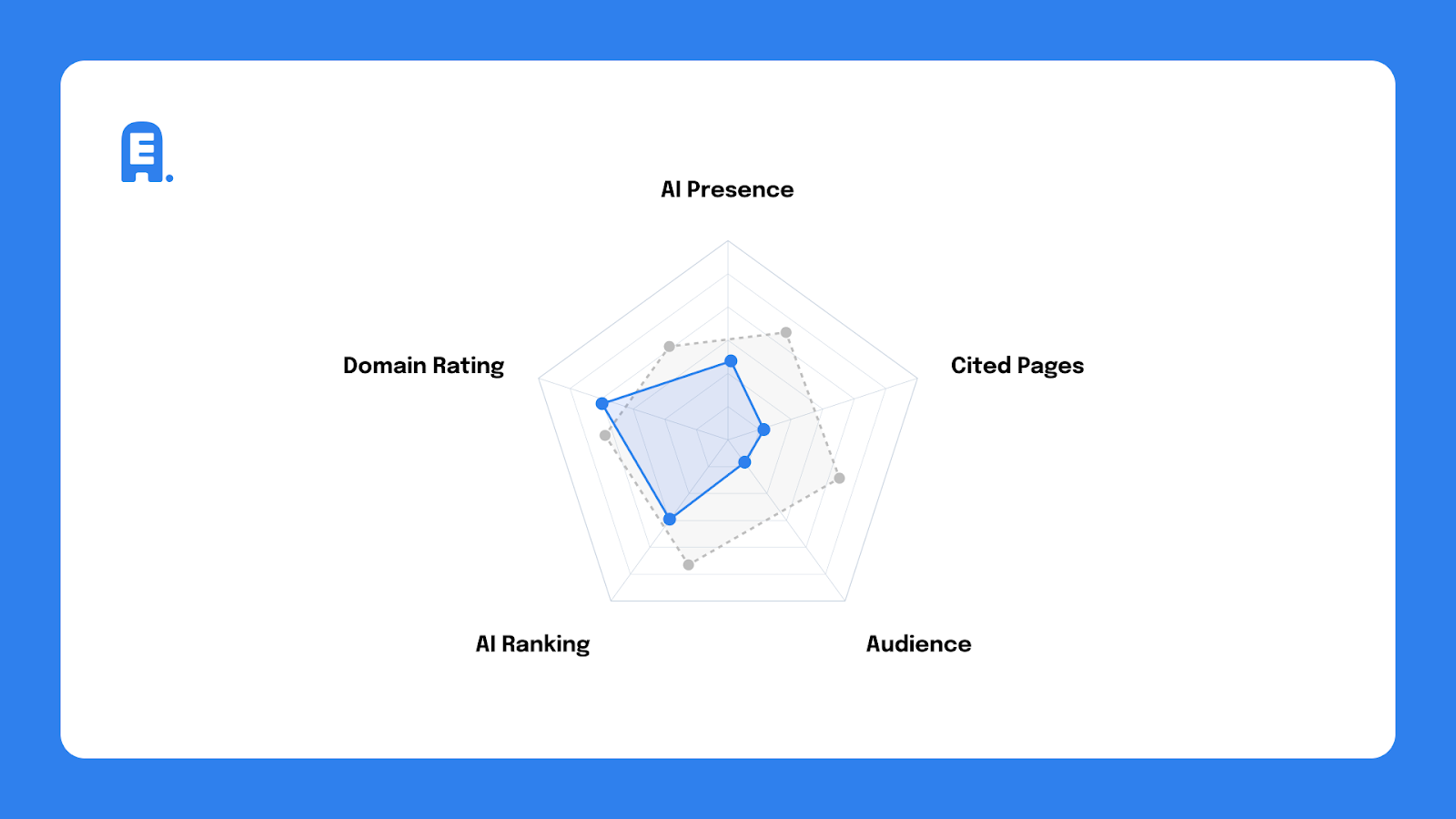

The Competitive AI Radar becomes especially useful when benchmarks are layered in.

By plotting a brand against the industry average, we can immediately see where it’s underperforming in citation volume, audience reach, or commercial positioning. In many cases, the signals point to the same underlying issue: not enough high-quality, AEO-optimized content that AI systems are willing to reference.

In other cases, the limitation is more foundational. Weak SEO performance often caps how far AEO efforts can go, regardless of intent.

We can also compare two brands directly (client versus core competitor) to show which brand consistently wins AI visibility in key market segments. That comparison changes the conversation. It shifts the focus from abstract ambition to concrete gaps that can be closed.

How the data is gathered

This isn’t a one-off prompt test or surface-level scan.

- Authority and SEO signals are sourced from SEMrush.

- A proprietary crawl bot analyzes outputs from leading LLMs.

- Testing runs against a stable list of high-intent commercial prompts to determine whether a brand is treated as “the answer.”

- All results are calibrated against an industry average to contextualize citation volume and audience size.

The result is a grounded view of where a brand stands right now and not where it hopes to be.

What to do once you see it

The Competitive AI Radar isn’t a scorecard to pin up for kudos. It’s a diagnostic tool that shows where performance breaks down and why.

Once the gaps are visible, what should come next is pretty clear. You do one or a combination of things:

- Strengthen content that AI systems can cite with confidence.

- Address SEO weaknesses that limit AEO performance.

- Focus on prompts tied to real buying intent and not vanity visibility.

- Close the specific gaps competitors are exploiting in AI search results.

Your brand is already out there, and an AI tool is already shaping how it’s perceived. The only question now is whether that perception is intentional or left to chance. If you want help making your brand AI-ready, we can help.

Read more from the Edgar Allan Blog.

- What AI Search Actually Rewards: Expertise, POV, and Saying Something Real

- Machines Are Reading Your Website (And They’re Probably Getting Your Brand All Wrong)

- Machines Are Reading Your Website (And They’re Probably Getting Your Brand All Wrong) Part 2

- From Discovery to Decision: How AEO Changed the CRO Playbook